Why Agent UIs Lose Messages on Refresh

Messages disappear after refresh, reconnect, and deploys. This post covers the six common failure modes and the ordered-log pattern that fixes them.

TL;DR: Messages vanish because SSE and WebSocket streams aren't durable. You'll patch it (reconnect logic, replay, dedup, REST history, client-side sorting) and end up maintaining a bespoke message broker in your frontend. The real fix: an ordered, immutable log with cursor-based tailing underneath your transport layer. You can build it on Kafka, Redis Streams, or Postgres. You can use something purpose-built. But the primitive is non-negotiable.

It starts innocently. You're building a chat UI for your AI agent. You pick a framework, wire up an SSE connection, and render messages as they stream in. The tokens flow, the cursor blinks, the demo looks great. You ship it.

It works — for one user, on one device, on a fast connection, talking to a single agent. Production is different.

A customer refreshes the page mid-stream and two messages vanish. Another customer opens the same session in a second tab and sees events in a different order. A third reports that tool results appear before the tool call that triggered them. Your support queue fills with bugs you can't reproduce locally, because locally you have one browser, one tab, and localhost latency.

You start fixing. A reconnect handler here. A dedup check there. A REST endpoint for history. A client-side sort. Each patch is small and reasonable. Each one introduces a new class of edge case. Six months in, you're not building a chat UI anymore — you're maintaining a bespoke message broker that you never meant to write.

These are not edge cases. They are the default outcome of every architecture that treats real-time streaming and durable history as separate problems. They surface only when real users on real networks do what real users do: close laptops, switch tabs, ride elevators, refresh pages.

This post walks through six failure modes we hit in our own agent UIs, explains why the fixes compound into a message broker you never meant to build, and surveys the options for getting the primitive right — from Kafka to Postgres to purpose-built tools.

Why do messages disappear after reconnect?

Because the stream isn't durable — and the problem is everywhere. A user on the OpenAI forums reported that "messages that were previously written and appeared to be finalized can disappear upon exiting and re-entering the chat." Another was writing a book with ChatGPT and found that "chapters 3 to 12 had vanished." On GitHub, a BotFramework-WebChat issue tracked how chat history vanishes after page refresh despite reconnection logic — the core team acknowledged they needed to "re-architect Web Chat to support this feature without hacks."

The failure is silent. The WiFi drops for 30 seconds — train, elevator, flaky office network. Your EventSource reconnects automatically (or your WebSocket reconnect handler fires). No error message, no indication that anything went wrong. But the events the agent emitted during those 30 seconds are just gone. The user doesn't know anything is missing. They act on incomplete information.

If you're lucky, your backend stores conversation state and you can tell the user to refresh. If the user is running a local tool or the backend doesn't persist mid-stream events, those messages are gone forever.

The common fix: Store events server-side and replay them when the client reconnects.

Why it still fails: SSE's built-in resume mechanism, the Last-Event-ID header, only works if the server tags each event with an id: field, and many server implementations don't. Even when they do, the header reflects the last event the browser's EventSource received, not the last event your application rendered, and a page refresh creates a fresh EventSource that sends no Last-Event-ID at all. If the server instead replays from "where the client should be," you either miss events (the server's checkpoint is ahead of what the client actually rendered) or duplicate events (the client had already rendered a few events past the checkpoint). Making SSE truly resumable requires cursor tracking that lives outside the transport layer.

The root cause: the client's consumption cursor and the server's emission cursor are not the same thing, and most architectures silently conflate them.
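To make the two-cursor mismatch concrete, here is a minimal TypeScript sketch. The `LogEvent` shape, the `replayAfter` helper, and the specific checkpoint values are assumptions chosen for illustration, not part of any particular server.

```typescript
// Hypothetical event shape: `seq` is a position in a server-side log.
interface LogEvent {
  seq: number;
  text: string;
}

// Replay everything strictly after the given cursor.
function replayAfter(log: LogEvent[], cursor: number): LogEvent[] {
  return log.filter((e) => e.seq > cursor);
}

// Ten events exist in the log; the client rendered 1..6 before the drop.
const log: LogEvent[] = Array.from({ length: 10 }, (_, i) => ({
  seq: i + 1,
  text: `event ${i + 1}`,
}));
const clientRendered = 6;

// The server believes it delivered up to 8 (7 and 8 were in flight when the socket died).
const fromOptimisticCheckpoint = replayAfter(log, 8).map((e) => e.seq);
// The server checkpointed conservatively at 4.
const fromStaleCheckpoint = replayAfter(log, 4).map((e) => e.seq);
// The client reports its own cursor.
const fromClientCursor = replayAfter(log, clientRendered).map((e) => e.seq);

console.log(fromOptimisticCheckpoint); // [9, 10]: events 7 and 8 are never shown
console.log(fromStaleCheckpoint); // [5, 6, 7, 8, 9, 10]: events 5 and 6 render twice
console.log(fromClientCursor); // [7, 8, 9, 10]
```

Only the replay driven by the client's own cursor produces neither a gap nor a duplicate.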

Why do duplicate messages appear after reconnect?

The mirror of gaps — and just as widespread. A Vercel AI SDK issue reports "the tool use is displayed twice" when using useChat with addToolResult, with multiple developers confirming "similar weird behaviours." On Open WebUI, streaming responses from thinking models appeared three times in the chat. A STOMP.js bug shows how "subscriptions with the same ID as previous subscriptions are recreated on reconnection" — every reconnect doubles the message delivery.

The client reconnects and the server replays events "from the last known position." But the client had already rendered some of those events before the disconnect. Now the same message appears twice — or three times.

Deduplication sounds easy until you realize:

- The SSE spec's Last-Event-ID header promises resumable streaming, but it carries the last ID the browser's EventSource received, not the last ID your application processed. And many servers never set id: fields at all.

- WebSocket has no built-in resume or replay semantics whatsoever. EventSource at least tries; WebSocket doesn't even pretend.

- If your events are identical text chunks ("Drafting...", "Drafting..."), there is no natural key to deduplicate on.

- Client-side dedup with a Set of IDs works until the page refreshes and the Set is gone.

The real problem: Neither SSE nor WebSocket guarantees exactly-once delivery. Teams bolt on deduplication after the fact, so every consumer must implement its own dedup logic. In a multi-agent system with multiple concurrent producers, the complexity explodes. This is the core of the SSE vs WebSocket debate for AI streaming: neither transport solves the problem, because the problem isn't the transport — it's the lack of an ordered log underneath it.
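If every event carries a log-assigned sequence number, dedup can collapse to a single persisted integer instead of a Set of IDs. The sketch below assumes a hypothetical `seq` field and in-order delivery on each connection. The `CursorStore` interface is an invention for this example; in a browser it might be backed by localStorage.

```typescript
interface SeqEvent {
  seq: number;
  text: string;
}

// Minimal storage abstraction so the sketch runs outside a browser.
interface CursorStore {
  get(): number;
  set(seq: number): void;
}

function memoryStore(initial = 0): CursorStore {
  let value = initial;
  return {
    get: () => value,
    set: (seq) => {
      value = seq;
    },
  };
}

// Returns a handler that renders each sequence number at most once.
function makeDeduper(store: CursorStore, render: (e: SeqEvent) => void) {
  return (event: SeqEvent): boolean => {
    if (event.seq <= store.get()) return false; // already rendered: drop it
    render(event);
    store.set(event.seq); // advance the cursor only after rendering
    return true;
  };
}

const rendered: string[] = [];
const store = memoryStore();
const handle = makeDeduper(store, (e) => {
  rendered.push(`${e.seq}:${e.text}`);
});

// First connection delivers 1..3; two events carry identical text.
handle({ seq: 1, text: "Drafting..." });
handle({ seq: 2, text: "Drafting..." });
handle({ seq: 3, text: "Done." });
// After a reconnect the server replays 2 and 3, then continues with 4.
handle({ seq: 2, text: "Drafting..." });
handle({ seq: 3, text: "Done." });
handle({ seq: 4, text: "Anything else?" });

console.log(rendered); // ["1:Drafting...", "2:Drafting...", "3:Done.", "4:Anything else?"]
```

The identical "Drafting..." chunks stay distinct because the key is the position in the log, not the content.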

SSE vs WebSocket for AI streaming: which should you use?

| | SSE (EventSource) | WebSocket |
|---|---|---|
| Resume on disconnect | Last-Event-ID header (optional; most servers skip it) | None; you build your own |
| Auto-reconnect | Built-in | Manual |
| Ordering | In-order within one connection | In-order within one connection |
| Catch-up after gap | Not supported; missed events are gone | Not supported; missed events are gone |
| Dedup | None | None |

Bottom line: Neither is durable. Both are transport layers. The fix isn't picking the right transport — it's putting a durable, ordered log underneath either one and tailing from a cursor.
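As a rough illustration of keeping the cursor outside the transport, the sketch below frames a log event for SSE with its sequence number in the id: field and derives a resume cursor on reconnect. The `cursor` query parameter and both helper names are hypothetical. The frame layout (id, event, and data lines ending in a blank line) follows the SSE wire format.

```typescript
interface SeqEvent {
  seq: number;
  type: string;
  data: unknown;
}

// Serialize one log event as an SSE frame. The `id:` value is what the
// browser echoes back in the Last-Event-ID header on automatic reconnect.
function toSseFrame(event: SeqEvent): string {
  return `id: ${event.seq}\nevent: ${event.type}\ndata: ${JSON.stringify(event.data)}\n\n`;
}

// Accept only non-negative integers as cursors.
function parseCursor(raw: string | undefined): number | undefined {
  if (raw === undefined || !/^\d+$/.test(raw)) return undefined;
  return Number(raw);
}

// An explicit cursor supplied by the application (hypothetical `cursor`
// query parameter) wins over the header, because a fresh page load sends
// no Last-Event-ID. With neither, start from the beginning of the log.
function resumeCursor(
  queryCursor: string | undefined,
  lastEventId: string | undefined,
): number {
  return parseCursor(queryCursor) ?? parseCursor(lastEventId) ?? 0;
}

console.log(toSseFrame({ seq: 7, type: "message", data: { text: "hi" } }));
console.log(resumeCursor(undefined, "41")); // 41
console.log(resumeCursor("12", "41")); // 12
console.log(resumeCursor(undefined, undefined)); // 0
```

The same cursor value would work over a WebSocket by sending it in the first message after connecting; the transport only carries it.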

Why do tool results appear without their tool calls?

A LobeChat issue captures this perfectly: "When any tool is invoked in the previous conversation round, the user message and assistant message of the current round both disappear instantly after the AI reply completes." The messages come back on refresh — but only sometimes. A separate Vercel AI Chatbot issue found that useChat tool invocations don't render at all in saved conversations because the database stores a generic tool-invocation type while the UI checks for specific types like tool-getWeather. Users who resume a session after a tab switch or refresh see a broken conversation. The tool ran. The result exists. But the user sees nothing, or sees a result with no preceding call.

Agent calls a tool. The tool result comes back. The UI renders them. But what if the tool call was event #47 and the tool result was event #52, and the client only received events starting from #50 after a reconnect?

The user sees a tool result with no preceding tool call. The UI either crashes (if it expects the call to exist in state), renders a dangling result (confusing), or silently drops it (data loss).

The problem compounds with multi-agent handoffs. Agent A calls a tool, hands off to Agent B, and Agent B references the tool result. If the client missed the handoff event, Agent B's messages appear to come from nowhere.

The common fix: Embed parent references in each event. Fetch missing parents on demand.

Why it still fails: Now you have N+1 fetch waterfalls on reconnect. Latency spikes. The UI jitters as it lazily loads missing context. And if the parent-fetch endpoint has different consistency guarantees than the streaming endpoint (which it usually does, because one is an HTTP GET and the other is a live stream), you get stale parent data that contradicts the child event.
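The #47/#52 scenario can be reproduced in a few lines. This sketch assumes a hypothetical event shape in which calls and results share a `callId`. It only detects dangling results in whatever window the client received; it is not a fix.

```typescript
type AgentEvent =
  | { seq: number; kind: "tool_call"; callId: string }
  | { seq: number; kind: "tool_result"; callId: string }
  | { seq: number; kind: "message"; text: string };

// Return tool results whose originating call is not in the received window.
function orphanedResults(events: AgentEvent[]): AgentEvent[] {
  const seenCalls = new Set<string>();
  const orphans: AgentEvent[] = [];
  for (const e of events) {
    if (e.kind === "tool_call") {
      seenCalls.add(e.callId);
    } else if (e.kind === "tool_result" && !seenCalls.has(e.callId)) {
      orphans.push(e);
    }
  }
  return orphans;
}

const session: AgentEvent[] = [
  { seq: 47, kind: "tool_call", callId: "call-1" },
  { seq: 48, kind: "message", text: "Looking that up..." },
  { seq: 52, kind: "tool_result", callId: "call-1" },
];

// A reconnect that resumes "from now" happens to start at #50.
const afterLossyReconnect = session.filter((e) => e.seq >= 50);
// A reconnect that resumes from the client's cursor (last processed: #46).
const afterCursorResume = session.filter((e) => e.seq > 46);

console.log(orphanedResults(afterLossyReconnect).map((e) => e.seq)); // [52]
console.log(orphanedResults(afterCursorResume).length); // 0
```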

Why do multi-agent messages arrive out of order?

Ordering is already fragile with a single agent — a Vercel AI SDK issue reports "most of the time I get some out of order responses, which also messes up my markdown formatter," and a react-use-websocket issue found that "data can appear jumbled, missed, or dropped even though the data arrives in order at the website level."

With multiple agents it's not a bug — it's a certainty. Agent A emits event at T=100ms. Agent B emits event at T=101ms. Each agent is a separate process with its own connection. Network jitter delivers Agent B's event first. Agent B's review references Agent A's analysis. The user sees the reference before the thing it references. The conversation reads like nonsense.

The common fix: Sort by timestamp on the client.

Why it still fails:

- Clock skew between agent processes (even 1ms matters for ordering).

- You can't sort a live stream—you'd have to buffer, wait for a "safe" window, then emit, which adds latency and complexity.

- Client-side sort means the UI must maintain a sorted buffer and re-render on every insert, which trashes performance with high-throughput agents.

The real problem: Multiple producers are writing to a single logical session without a total ordering. Timestamps are not a total order. You need a sequence number assigned by a single authority.
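A small sketch of the difference, assuming an in-memory `Sequencer` class invented for this example: the authority assigns `seq` in arrival order, while each agent stamps events with its own skewed clock.

```typescript
interface Stamped {
  agent: string;
  text: string;
  localTimeMs: number; // the producing agent's own clock
}

interface Sequenced extends Stamped {
  seq: number; // assigned by the single authority on append
}

class Sequencer {
  private next = 1;
  private readonly log: Sequenced[] = [];

  append(event: Stamped): Sequenced {
    const entry: Sequenced = { ...event, seq: this.next++ };
    this.log.push(entry);
    return entry;
  }

  all(): Sequenced[] {
    return [...this.log];
  }
}

const sequencer = new Sequencer();
// Agent A writes first, but its clock runs 5 ms fast.
sequencer.append({ agent: "A", text: "analysis", localTimeMs: 105 });
// Agent B writes second and references A's analysis; its clock is accurate.
sequencer.append({ agent: "B", text: "review of analysis", localTimeMs: 101 });

const byTimestamp = sequencer
  .all()
  .sort((x, y) => x.localTimeMs - y.localTimeMs)
  .map((e) => e.agent);
const bySeq = sequencer
  .all()
  .sort((x, y) => x.seq - y.seq)
  .map((e) => e.agent);

console.log(byTimestamp); // ["B", "A"]: the review appears before the analysis
console.log(bySeq); // ["A", "B"]
```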

Why do different tabs show different messages?

User opens the same session in two browser tabs. Both tabs open their own SSE connection. Both tabs start receiving events from "now."

But Tab 1 opened 30 seconds before Tab 2. Tab 1 has 30 seconds of history that Tab 2 doesn't. The tabs show different content for the same session.

The common fix: On tab open, fetch full history via REST, then switch to streaming.

Why it still fails: There's a race between "history fetch completed" and "streaming connection starts receiving." Events emitted during that window are either missed (gap) or duplicated (if you overlap the fetch and stream ranges).
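The race can be stated as simple arithmetic. In this sketch, which assumes sequence-numbered events and uses hypothetical names, the history response covers everything up to `historyHead` and the live stream begins delivering at `liveStart`. The two requests are independent, so nothing forces `liveStart` to equal `historyHead + 1`.

```typescript
type SeamKind = "gap" | "duplicates" | "exact";

interface Seam {
  kind: SeamKind;
  affected: number[]; // sequence numbers that are missed or delivered twice
}

// History covers seq 1..historyHead (inclusive); live delivers seq >= liveStart.
function seam(historyHead: number, liveStart: number): Seam {
  const affected: number[] = [];
  if (liveStart > historyHead + 1) {
    for (let s = historyHead + 1; s < liveStart; s++) affected.push(s);
    return { kind: "gap", affected };
  }
  if (liveStart <= historyHead) {
    for (let s = liveStart; s <= historyHead; s++) affected.push(s);
    return { kind: "duplicates", affected };
  }
  return { kind: "exact", affected };
}

// History was fetched first; the stream attached two events later.
console.log(seam(40, 43)); // { kind: "gap", affected: [41, 42] }
// The stream attached first; history then overlapped it.
console.log(seam(40, 38)); // { kind: "duplicates", affected: [38, 39, 40] }
// The only clean outcome requires the two paths to agree exactly.
console.log(seam(40, 41)); // { kind: "exact", affected: [] }
```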

The root cause is the exact same problem as reconnect, triggered by a different user action. And it proves the issue is architectural, not incidental.

Why does every deploy break all active sessions?

All of the above failure modes assume the user's network is the problem. This one is on you. You push a deploy. Your server instances restart. Every WebSocket and SSE connection drops simultaneously. Every connected user hits gaps, duplicates, or both — at the same time.

This isn't hypothetical. It's every deploy. If you deploy multiple times a day—and you should—you trigger a mass reconnection storm every time. Every user on your product reconnecting at once, all requesting replay, all hitting your history endpoint in a thundering herd.

The common fix: Rolling deploys with graceful shutdown. Drain connections before killing the old instance.

Why it still fails: Graceful shutdown helps with clean disconnects — the server can send a "goodbye" frame and the client can reconnect immediately. But the fundamental problem remains: after reconnect, the client needs to catch up from wherever it left off. If your architecture doesn't support cursor-based resumption, a graceful shutdown just gives you a polite version of the same gaps and duplicates. And if your deploy takes longer than your drain timeout, you're back to hard kills anyway.

The real insult: this failure mode is correlated. Network blips hit users randomly. Deploys hit everyone at once. Your monitoring dashboard lights up, your error rates spike, and your support queue fills with the same bug report from fifty different customers — all because you shipped a one-line CSS fix.

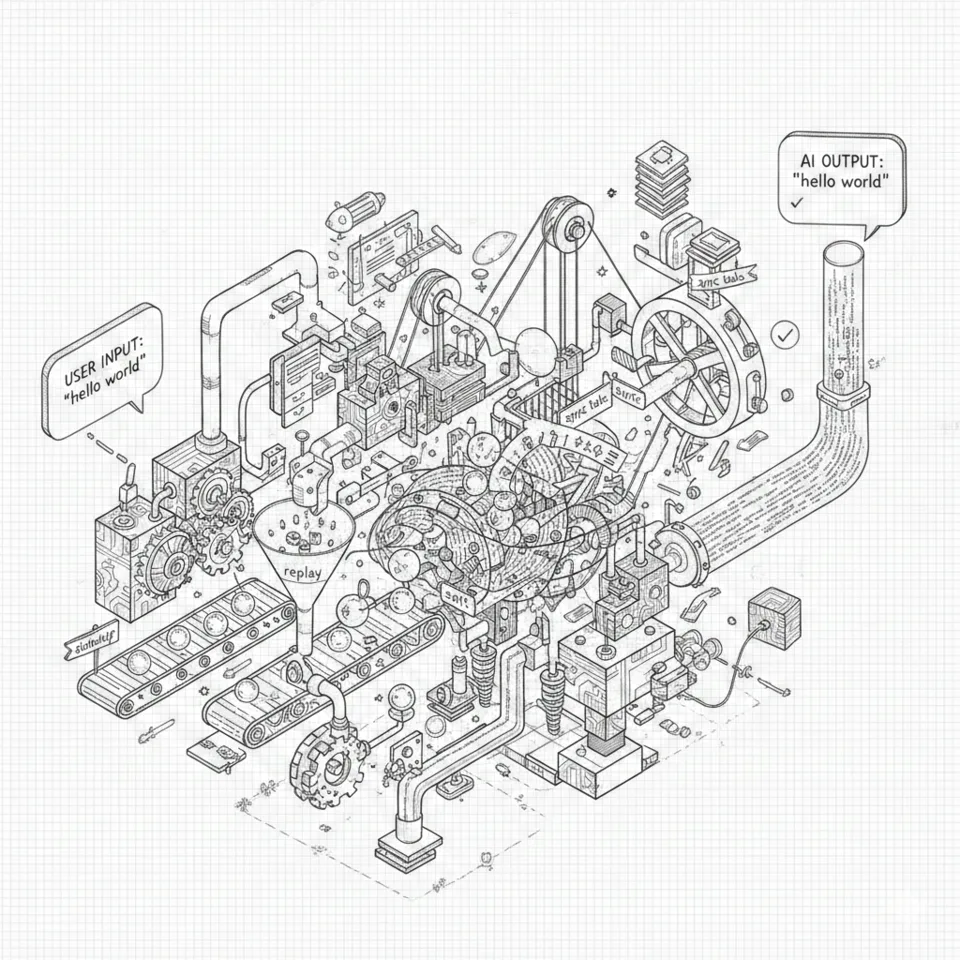

You're building a message broker

Every team walks the same path. We know because we walked it ourselves.

You start with onmessage → render. Simple. Then users report gaps, so you add reconnect logic. Then reconnects still drop events, so you add replay. Then replay causes duplicates, so you add dedup with a client-side Set. Then the page refreshes and the Set is gone, so you add a REST endpoint for history. Then history and live events arrive out of order, so you add client-side sorting. Then tool results arrive without their parent calls, so you add a parent-fetch mechanism. Then users open two tabs and see different state, so you add cross-tab coordination via BroadcastChannel.

Each fix is small. Each fix is reasonable. Each fix passes code review.

But zoom out. You've reimplemented consumer groups. Offset tracking. Exactly-once delivery. Total ordering. In your React app.

These are features of a message broker. Kafka has spent over a decade getting them right, with dedicated teams at every major tech company. You reimplemented them in a useEffect hook — without the durability guarantees, backpressure mechanisms, or battle-testing that come from running at scale for years.

It's sneaky because single-agent chat hides the problem completely. One producer, one consumer, one connection. The transport is the source of truth — events arrive in order, there's nothing to deduplicate, and reconnect is just "start from the top." The architecture works fine because you're not building a distributed system. You're building a pipe.

The moment you add a second agent, you have multiple producers. The moment you add reconnect, you have at-least-once delivery. The moment you open a second tab, you have multiple consumers. The moment you add a REST history endpoint alongside a live stream, you have two sources of truth that can diverge. You didn't mean to build a distributed system. But a multi-agent chat UI is a distributed system — multiple producers, multiple consumers, unreliable networks, concurrent state mutations — and every fix that treats it as something simpler is addressing a symptom, not the disease.

Each fix also interacts with the others. Dedup logic that assumes events arrive in order breaks when your client-side sort reorders them. Parent-fetch that works fine on initial load fails when the parent was delivered via BroadcastChannel from another tab. The complexity isn't additive — it's multiplicative. And the bugs surface only at 2 AM, on a spotty mobile connection, when a user switches tabs during a multi-agent handoff.

This isn't theoretical. ElectricSQL built Durable Streams after "seeing teams reinvent this for AI token streaming." The pydantic-ai-stream team built the same thing on Redis Streams because they "kept rewriting the same infrastructure to show agents to users." Letta explicitly requested a conversation-scoped cursor stream as a product feature. LibreChat tried it with Redis Streams and still got gaps when subscribers connected late.

Everyone arrives at the same primitive. The question is how many months of patches it takes to recognize it.

The fix: one read path, not two

Every architecture above has two read paths: a REST endpoint for history and a live stream for new events. All six bugs live in the seam between them. The fix is to collapse both into one.

Every event in a session is appended to an ordered, immutable log. Every consumer reads from that log using a cursor that it owns. Catch-up and live streaming are the same operation — the cursor is the only variable.

A single sequencer assigns monotonically increasing sequence numbers on write. On read, the client sends its cursor — the last sequence number it successfully processed — and the server streams everything after it. If the cursor is behind the head of the log, the client catches up. If it's at the head, the client live-tails. Same code, same semantics, no seam.

Gaps disappear because the cursor is exact — the server replays from the right position. Duplicates disappear because there's no overlap between catch-up and live. Ordering is structural because the log defines the total order. Tool-call/result pairing is preserved because both live in the same sequence. Multi-tab is just two cursors on the same log. Deploys are survivable because every client resumes from its own cursor.
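The pattern fits in a few dozen lines when everything lives in one process. The `SessionLog` class below is an in-memory sketch with invented names, with no durability or network involved. It assumes consumers do not append from inside their own callback. Its purpose is to show that catch-up and live tailing can be the same call with a different cursor.

```typescript
interface LogEntry<T> {
  seq: number;
  payload: T;
}

type Unsubscribe = () => void;

class SessionLog<T> {
  private readonly entries: LogEntry<T>[] = [];
  private readonly tails = new Set<(entry: LogEntry<T>) => void>();

  // Single sequencer: the log assigns the next sequence number on write.
  append(payload: T): LogEntry<T> {
    const entry: LogEntry<T> = { seq: this.entries.length + 1, payload };
    this.entries.push(entry);
    this.tails.forEach((deliver) => deliver(entry));
    return entry;
  }

  // One read path: replay everything after `cursor`, then keep tailing.
  tail(cursor: number, onEntry: (entry: LogEntry<T>) => void): Unsubscribe {
    let position = Math.max(0, cursor);
    const deliver = (entry: LogEntry<T>) => {
      if (entry.seq <= position) return; // never deliver the same seq twice
      position = entry.seq;
      onEntry(entry);
    };
    // seq starts at 1, so entries after `position` begin at index `position`.
    this.entries.slice(position).forEach(deliver);
    this.tails.add(deliver);
    return () => {
      this.tails.delete(deliver);
    };
  }
}

const log = new SessionLog<string>();
log.append("a");
log.append("b");
log.append("c");

const tab1: number[] = [];
const tab2: number[] = [];
log.tail(0, (e) => {
  tab1.push(e.seq); // fresh tab: full catch-up, then live
});
log.append("d");
log.tail(2, (e) => {
  tab2.push(e.seq); // resuming tab: already processed 1..2
});
log.append("e");

console.log(tab1); // [1, 2, 3, 4, 5]
console.log(tab2); // [3, 4, 5]
```

Both tabs end on the same sequence with no overlap handling in the consumer; the only thing that differs between them is the cursor they passed in.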

This isn't a new idea. Kafka has offsets. Postgres has LSNs. Redis Streams has entry IDs that you read forward from with XREAD. The pattern has existed for decades in infrastructure. The insight isn't the cursor. It's recognizing that your chat UI needs the same primitive that every durable message system already provides, and that the transport layer (SSE, WebSocket) is not where durability should live.

"I'll just use Kafka"

You might be reading this and thinking: "OK, I need an ordered log with cursor-based reads. I'll spin up Kafka. Or Redis Streams. Or a Postgres table with a sequence."

You can. But the ordered log is the foundation, not the complete solution. Here's what you still need to build on top.

Session-level semantics

Kafka thinks in topics and partitions, not sessions. A topic-per-session creates partition explosion at scale. A shared topic means you route events to the right partition yourself, and consumers need to filter by session ID. Redis Streams thinks in stream keys, which is closer, but you manage lifecycle, TTLs, and memory pressure yourself. Postgres gives you tables, but "subscribe to new rows in real time" isn't something it does natively: you're bolting LISTEN/NOTIFY onto a polling loop and hoping nothing falls through the cracks.

Catch-up and live tail as one read path

This is the thing that sounds easy and isn't. Most implementations end up with a "query history" codepath and a "subscribe to new events" codepath — the exact two-path problem you were trying to solve. Kafka's consumer API can seek to an offset and poll forward, which is close. But integrating that with SSE or WebSocket delivery to a browser client is another layer of engineering. Redis XREAD with BLOCK handles this more naturally, but only within a single Redis node.

Write ordering under concurrency

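Multiple agents appending to the same session need a single authority assigning sequence numbers. Kafka gives you this per-partition, but now you're managing the session-to-partition mapping. Postgres sequences work, but a sequence value is allocated before its transaction commits, so a lower number can become visible after a higher one; you need advisory locks or serializable isolation to keep a tailing reader from skipping it under concurrent inserts. Redis XADD auto-generates IDs that increase monotonically within a single stream key, but they are timestamp-derived (milliseconds plus a counter) rather than dense sequence numbers, and the ordering guarantee stops at that one key.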
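For the Kafka case, the session-to-partition mapping usually comes down to hashing the session ID so that every event for a session lands on the same partition and inherits that partition's ordering. The sketch below uses an FNV-1a-style hash as a stand-in; it is not Kafka's own partitioner, and the function names are hypothetical. In practice a Kafka producer applies its own key hashing when the record key is the session ID.

```typescript
// FNV-1a-style 32-bit hash over UTF-16 code units (illustrative only).
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // keep it an unsigned 32-bit value
  }
  return hash >>> 0;
}

// Every event for one session maps to one partition, so the partition's
// offset order becomes the session's total order.
function partitionFor(sessionId: string, partitionCount: number): number {
  return fnv1a(sessionId) % partitionCount;
}

const first = partitionFor("session-42", 12);
const second = partitionFor("session-42", 12);
console.log(first === second); // true: the mapping is deterministic
console.log(first >= 0 && first < 12); // true: always a valid partition index
```

The trade-off is that the mapping depends on the partition count, so changing that count remaps existing sessions unless the mapping is stored somewhere.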

Typed event schema

Tool calls, tool results, agent messages, status updates, handoffs — these aren't opaque byte payloads. The log needs a schema that supports filtering, parent-child relationships (which tool call does this result belong to?), and progressive rendering (streaming deltas vs. committed events). With Kafka, you'd layer Avro or Protobuf on top. With Redis or Postgres, you define and enforce this yourself.
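One way to express such a schema is a discriminated union. The field names and event types below are assumptions made for illustration and do not describe any product's actual schema. The `callId` links a result to its call, and `agent_delta` versus `agent_message` separates streaming deltas from committed events.

```typescript
interface BaseEvent {
  seq: number;
  sessionId: string;
  agentId: string;
}

type SessionEvent =
  | (BaseEvent & { type: "agent_delta"; messageId: string; text: string })
  | (BaseEvent & { type: "agent_message"; messageId: string; text: string })
  | (BaseEvent & { type: "tool_call"; callId: string; tool: string; args: unknown })
  | (BaseEvent & { type: "tool_result"; callId: string; result: unknown })
  | (BaseEvent & { type: "status"; status: string })
  | (BaseEvent & { type: "handoff"; toAgentId: string });

// Exhaustive switch: adding a new event type fails to compile until handled.
function summarize(event: SessionEvent): string {
  switch (event.type) {
    case "agent_delta":
      return `${event.agentId} (streaming): ${event.text}`;
    case "agent_message":
      return `${event.agentId}: ${event.text}`;
    case "tool_call":
      return `${event.agentId} calls ${event.tool} [${event.callId}]`;
    case "tool_result":
      return `result for [${event.callId}]`;
    case "status":
      return `${event.agentId} is ${event.status}`;
    case "handoff":
      return `${event.agentId} hands off to ${event.toAgentId}`;
    default: {
      const unreachable: never = event;
      return unreachable;
    }
  }
}

// Parent-child lookup: which tool call does this result belong to?
function findCall(events: SessionEvent[], callId: string): SessionEvent | undefined {
  return events.find((e) => e.type === "tool_call" && e.callId === callId);
}

const events: SessionEvent[] = [
  { seq: 47, sessionId: "s1", agentId: "planner", type: "tool_call", callId: "c1", tool: "getWeather", args: { city: "Oslo" } },
  { seq: 52, sessionId: "s1", agentId: "planner", type: "tool_result", callId: "c1", result: { tempC: 4 } },
];

console.log(summarize(events[0])); // "planner calls getWeather [c1]"
console.log(findCall(events, "c1")?.seq); // 47
```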

Horizontal scaling without sticky sessions

If your streaming server holds in-memory cursor state or local WebSocket connections, you need sticky routing. If you want real scale-out, session state must live externally. Kafka handles this well (partition-based distribution), but it comes with operational complexity. Redis Cluster adds consistency caveats. Postgres-backed solutions struggle here because LISTEN/NOTIFY doesn't cross nodes.

Operational overhead

Kafka is battle-tested, but notoriously ops-heavy: partition rebalancing, consumer group lag, retention policies, KRaft migration, disk management. Redis Streams is lighter but ephemeral by default — if the node restarts without RDB/AOF configured, your log is gone. Postgres is familiar and probably already in your stack, but it wasn't designed for high-throughput streaming fanout.

None of this is insurmountable. But it's not a weekend project either. It's the work that turns "I need an ordered log" into "I'm staffing a team to maintain streaming infrastructure" — and that's the transition most teams don't budget for.

What's available off the shelf

| | Kafka | Redis Streams | Postgres | S2 | Starcite |
|---|---|---|---|---|---|
| Ordered log | Per partition | Per stream key | Via sequences | Per stream | Per session |
| Cursor-based resume | Consumer offsets | XREAD + ID | DIY | Stream offsets | Built-in |
| Catch-up + live as one path | Seek + poll loop | XREAD BLOCK | Query + LISTEN/NOTIFY | Read + subscribe | Single endpoint |
| Session-level semantics | DIY | DIY | DIY | DIY | Native |
| Typed AI event schema | DIY (Avro/Protobuf) | DIY | DIY | DIY | Native |
| Multi-agent attribution | DIY | DIY | DIY | DIY | Native |
| Horizontal scaling | Partition rebalancing | Cluster mode | Limited | Managed | Managed |
| Ops burden | High | Medium | Low–Medium | Low | None (managed) |

Kafka, Redis Streams, and Postgres are general-purpose infrastructure. They give you an ordered log. S2 is a cloud-native streaming store that handles the log and scaling but leaves session semantics to you. Starcite is purpose-built for AI sessions: ordered events, cursor-based tailing, typed event schema, multi-agent attribution, zero ops.

The right choice depends on your team and your constraints. If you already run Kafka and have the engineering capacity to build the session layer, you can. If you want the primitive without the infrastructure, reach for something purpose-built.

What's the bottom line?

If you're building a multi-agent UI where users refresh, reconnect, switch tabs, or switch devices, you will hit every failure mode in this post. Not if — when.

The fix is not more reconnect logic. Not client-side dedup. Not timestamp sorting. It's recognizing that a multi-agent chat UI is a distributed system, and reaching for the primitive that distributed systems have used for decades: a durable, ordered log with cursor-based consumption.

You can build it on Kafka. You can build it on Redis Streams. You can build it on Postgres. Or you can use something that already handles the session semantics, event schema, and operational overhead for you. But the primitive itself is non-negotiable.

This is the problem starcite.ai solves.

Starcite is a durable session log for AI apps: ordered events + cursor replay. Three API calls. Zero lost messages.
